ℹ️ Quick Answer: Fake images of Maduro, created with AI tools like Midjourney and DALL-E 3, went viral within minutes of the January 2026 announcement of his capture. Public officials and accounts with millions of followers shared them as real. Only one authentic photo existed, posted by Trump himself. Here is how to tell the difference.

📋 WHAT’S INSIDE

- What Actually Happened With the Maduro Fake Images

- How the Maduro Fake Images Were Spotted

- Why Maduro Fake Images Matter to You

- How to Spot AI Fake Images (Quick Tips)

I have to admit, for a second there, they almost got me.

When President Trump announced on Truth Social that Venezuelan President Nicolas Maduro had been captured, my social media feeds exploded. It was a chaotic mix of celebration, shock, and, as it turns out, a whole lot of fakery. The story wasn’t just the capture. Within minutes, a flood of Maduro fake images made it almost impossible to tell what was real.

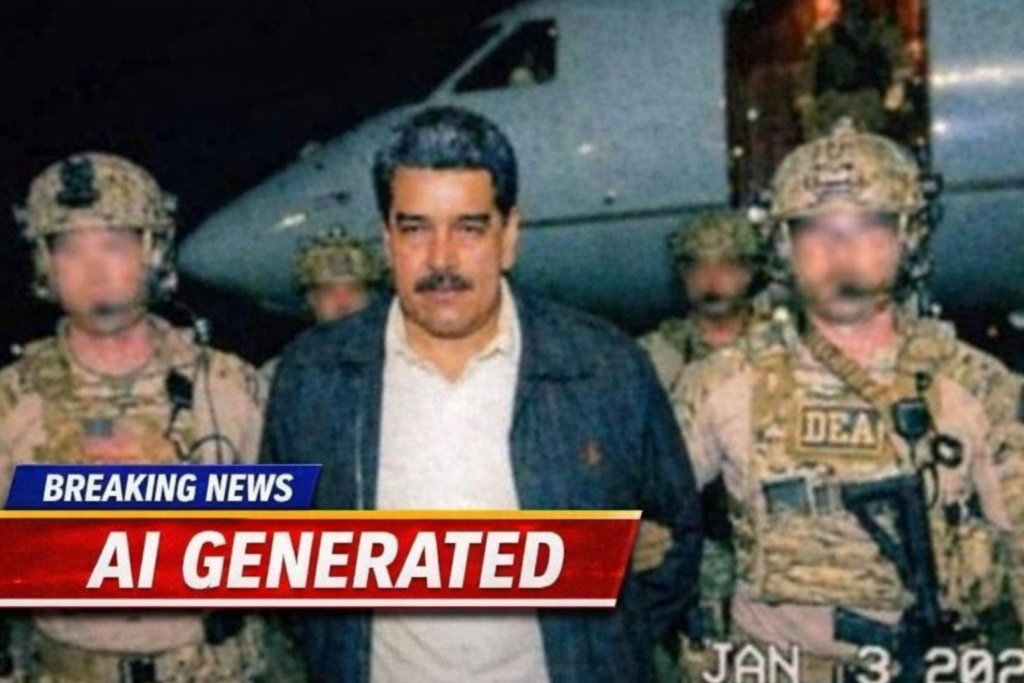

If you saw a picture of Maduro being hauled off a plane by DEA agents, you saw a fake.

What Actually Happened With the Maduro Fake Images

Within hours of the January 3, 2026 capture announcement, AI-generated photos of Maduro with DEA agents went viral, shared by Coral Gables Mayor Vince Lago and conservative accounts with over 6 million combined followers.

On January 3, 2026, the news broke. Immediately, AI-generated photos started going viral. You probably saw them. They showed dramatic scenes: Maduro with a defeated look, surrounded by tough-looking agents on an airfield. Another popular one showed him inside a military plane.

These weren’t just shared by random people. Coral Gables Mayor Vince Lago shared one. Conservative accounts with a combined following of over 6 million people pushed the images out to their massive audiences.

One viral image was traced back to an X account that literally described itself as an “AI video art enthusiast.” Someone even recycled an old photo of Saddam Hussein’s capture from 2003 and tried to pass it off as Maduro.

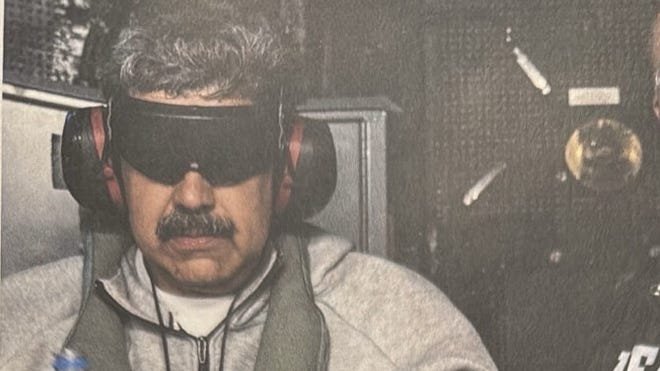

The only real photo came from Trump himself: Maduro in a Nike tracksuit, looking disheveled on the deck of the USS Iwo Jima. Everything else was digital smoke and mirrors.

How the Maduro Fake Images Were Spotted

Google DeepMind’s SynthID watermark tool flagged many of the fakes as AI-generated, while human viewers identified classic tells like waxy skin, six-fingered hands, and inconsistent shadow directions.

So how did we figure it out so fast? A couple of ways.

First, the tech way. Google has a tool called SynthID, built by Google DeepMind, which embeds an invisible digital watermark in AI-generated content and can later check an image for it. It’s not perfect, and it can only flag images that carry the watermark, but it was able to flag many of the most popular fakes, confirming they were created by an AI generator, not a camera.

Second, the good old-fashioned human eye test. The Maduro fake images had all the classic red flags of AI weirdness once you knew where to look.

- Lizard-person skin. AI generators tend to make skin look unnaturally smooth and waxy.

- The six-fingered man. AI still struggles with hands. You’d see weirdly long fingers, extra digits, or hands that just seemed to melt into the background.

- Funky lighting. Shadows and light sources were often inconsistent from person to person in the same photo.

- Missing heads. In the background of some shots, you’d see bodies without heads or other strange anatomical glitches.

Why Maduro Fake Images Matter to You

AI-generated misinformation during breaking news events affects everyone, because the same techniques used to create fake political photos can fabricate disaster images, financial panic, and celebrity scandals.

You might be thinking, “Okay, so some fake political pictures went viral. What does that have to do with me?”

Everything.

This isn’t just about world leaders. This technology is here, and it’s being used to create confusion around every kind of major event. Imagine a hurricane hits and fake pictures of collapsed bridges go viral, causing panic. Or a stock market dip gets amplified by fake images of chaos on the trading floor.

The goal of this stuff isn’t always to push a specific political agenda. Sometimes, it’s just to create noise, get clicks, and make you feel like you can’t trust anything you see. When you can’t tell what’s real, it’s easier to just disengage. And that’s a problem for everyone.

How to Spot AI Fake Images (Quick Tips)

The four-step defense against AI fakes: pause before sharing, check the source account, scan for visual tells (hands, lighting, waxy skin), and when in doubt, don’t amplify.

I’m not telling you to become a digital forensics expert. But we all have to get a little smarter about what we see and share.

- Just Pause. Before you hit share, take a breath. Emotional, shocking images are designed to make you react instantly. Don’t.

- Check the Source. Did this photo come from a reputable news agency like AP or Reuters, or from a random account? It matters.

- Look for the Weird Stuff. Do a quick scan. Do the hands look right? Is the lighting a bit off? Does everyone look like they were dipped in wax?

- When in Doubt, Don’t Share. You’re not obligated to amplify everything that crosses your screen.
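If it helps to see those four steps as a decision rule, here is a minimal Python sketch. It is only an illustration, not a real detection tool: the names `ImageCheck`, `should_share`, and `TRUSTED_SOURCES` are made up for this example, the trusted list contains just the two agencies mentioned above, and you would still answer the visual questions yourself by looking at the image.

```python
from dataclasses import dataclass

# Hypothetical allow-list: AP and Reuters are only the examples named in the tips.
TRUSTED_SOURCES = {"ap", "reuters"}


@dataclass
class ImageCheck:
    """Answers you fill in by hand after pausing to look at the image."""
    source: str                          # who posted it, e.g. "Reuters"
    hands_look_wrong: bool = False       # extra or melting fingers
    lighting_inconsistent: bool = False  # shadows that disagree
    waxy_skin: bool = False              # unnaturally smooth faces
    anatomy_glitches: bool = False       # e.g. headless background figures

    def red_flags(self) -> int:
        # Count how many visual tells were spotted.
        return sum([
            self.hands_look_wrong,
            self.lighting_inconsistent,
            self.waxy_skin,
            self.anatomy_glitches,
        ])


def should_share(check: ImageCheck) -> bool:
    """Return True only for a trusted source with zero visual red flags."""
    trusted = check.source.strip().lower() in TRUSTED_SOURCES
    if not trusted:
        return False  # unknown source counts as doubt
    if check.red_flags() > 0:
        return False  # any visual tell counts as doubt
    return True


if __name__ == "__main__":
    viral = ImageCheck(source="random_account", hands_look_wrong=True, waxy_skin=True)
    print(viral.red_flags(), should_share(viral))
```

The rule is deliberately strict: any doubt at all, whether about the source or about what is in the picture, comes back as "don't share."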

Want to go deeper? Check out our complete guide on How to Spot AI Fake Images: 5 Red Flags Anyone Can Find.

What we saw with the Maduro fake images wasn’t the first time this has happened, and it won’t be the last. In 2026, seeing isn’t always believing. The best defense is a healthy pause before you share.

