Is AI making us dumber? I’ve been thinking about this question a lot lately, mostly because I’ve noticed something uncomfortable about my own brain.

Last week, I was helping a friend debug some code. Simple stuff. The kind of thing I used to knock out in minutes. But I found myself reaching for Claude before I’d even tried to solve it myself. And when I finally did try to write the solution from memory? My fingers hovered over the keyboard like I’d forgotten how to type.

The syntax I used to know cold? Gone. Muscle memory that took years to build? Fuzzy at best.

Sound familiar? If you’ve been using ChatGPT, Claude, or any AI assistant regularly, you might be nodding right now. And according to new research from MIT, we’re not imagining things.

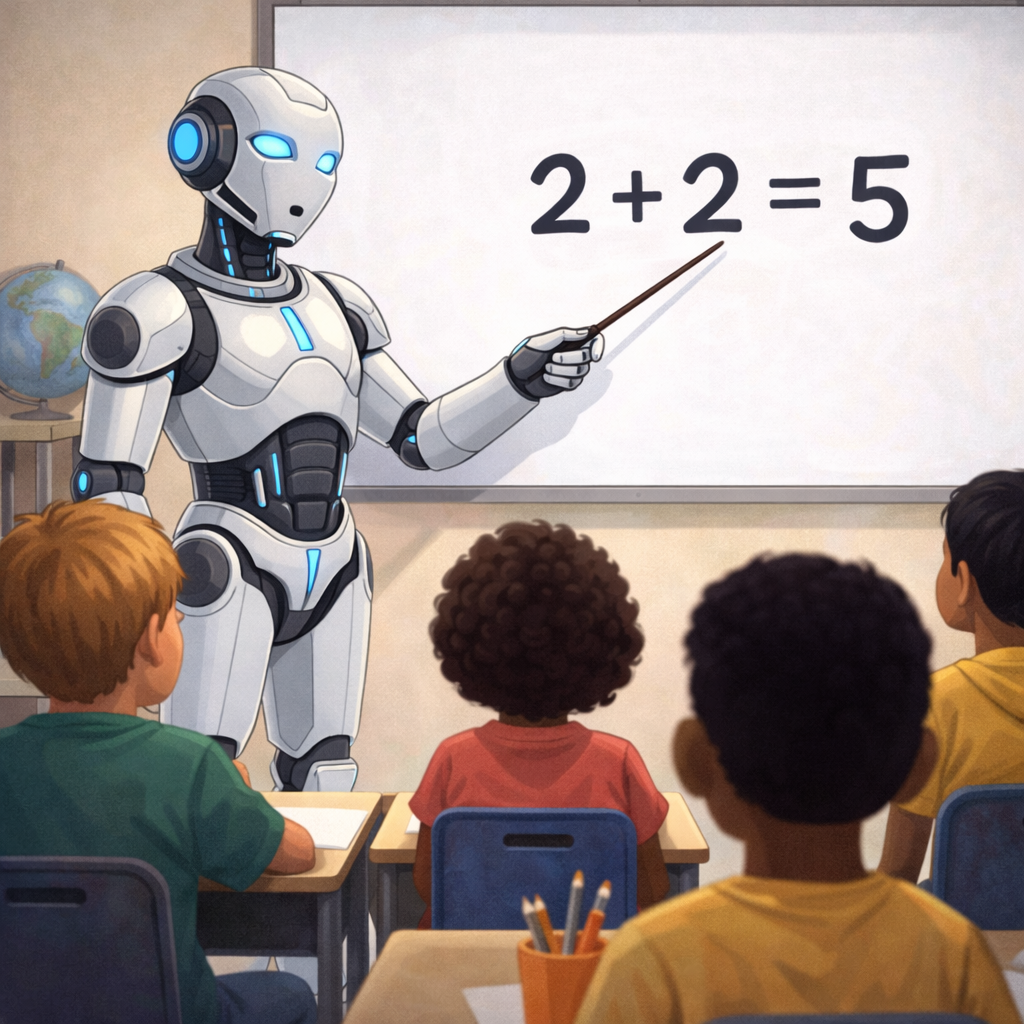

What the MIT Study Actually Found About AI and Your Brain

MIT Media Lab researchers found that heavy ChatGPT users showed 55% less brain connectivity in critical thinking regions, and 83% of participants couldn’t recall arguments from essays they’d written with AI assistance.

Researchers at MIT’s Media Lab published findings that stopped me mid-scroll. They studied people who regularly use ChatGPT and found something striking. Heavy users showed 55% less brain connectivity in regions associated with critical thinking and problem-solving.

The MIT study also found that 83% of participants couldn’t recall arguments from essays they’d written with AI assistance. The ideas existed on screen but never made it into long-term memory.

The correlation between AI reliance and critical thinking scores was strongly negative, with r = -0.68. In research terms, that’s not subtle. That’s a flashing neon sign.
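To put that number in perspective, you can square a correlation coefficient to get the share of variance two measures have in common. This is a rough rule of thumb that assumes a roughly linear relationship:

$$
r^2 = (-0.68)^2 = 0.4624 \approx 46\%
$$

Put another way, AI reliance tracks a little under half of the variation in critical thinking scores in that data. That is a strong association by social-science standards, but a correlation alone doesn’t tell us which way the cause and effect runs.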

Is AI Making Us Dumber, or Is This Just… Normal?

Every major cognitive tool, from calculators to GPS to Google Search, has degraded specific skills while freeing up mental bandwidth for other tasks. AI follows the same pattern at a much larger scale.

I want to pump the brakes on the panic here. Because we’ve been here before.

Remember when calculators were going to destroy our ability to do math? Researchers in the 1980s warned that students would lose basic arithmetic skills. And honestly? They were right. I can’t do long division in my head anymore. Neither can you, probably.

But we don’t consider ourselves “dumber” for it. We just… use calculators.

The same thing happened with GPS. A 2020 study from University College London found that people who rely heavily on navigation apps have measurably worse spatial memory. Plenty of us can barely find our way around our own neighborhoods without Google Maps anymore.

And then there’s the “Google Effect,” documented by Columbia University psychologist Betsy Sparrow back in 2011. Her team found that when we know information is easily searchable, our brains simply don’t bother storing it. Why memorize facts when you can just look them up?

The Cognitive Offloading Problem

Cognitive offloading frees up mental bandwidth when applied to routine tasks, but Microsoft Research and Carnegie Mellon University studies both show that outsourcing reasoning itself to AI causes measurable skill decline and dependency.

Scientists call this “cognitive offloading,” and it’s not inherently bad. Your brain has limited bandwidth. Outsourcing routine mental tasks frees up capacity for more complex thinking. At least, that’s the theory.

The problem is when we offload too much. When we stop exercising mental muscles entirely, they atrophy. A Microsoft Research study found that workers using AI assistants for writing tasks showed declining performance when the AI was removed. They’d become dependent.

Carnegie Mellon University researchers observed something similar. Students who used AI for homework performed worse on subsequent tests. The learning never happened because the AI did the thinking.

My Personal Experiment: Where AI Made Me Better

Working alongside Anthropic’s Claude as a writing collaborator improved my grammar and sentence structure over time, because using AI as a teacher rather than a replacement builds real skills instead of eroding them.

This is where the narrative gets complicated. While my coding syntax has gotten rusty, something else happened that I didn’t expect.

My writing got better.

Not because AI writes for me. But because working alongside Claude has been like having a patient editor looking over my shoulder. Every time it suggests a cleaner sentence structure, I learn something. Every time it catches a grammatical mistake I’ve been making for years, I internalize the correction.

I used to struggle with comma splices. Didn’t even know what they were, honestly. Now I catch them myself before AI even flags them. The training wheels became actual skills.

This tracks with research from Harvard Business School showing that AI can enhance human learning when used as a teaching tool rather than a replacement. The difference is in how you use it.

The Skills We’re Actually Losing (And Why It Might Be Okay)

Syntax recall, mental math, and navigation skills all erode when we hand them off to tools, but the industry shift toward AI-augmented work with tools like GitHub Copilot and Cursor means the skill that matters now is directing AI effectively, not memorizing code.

My syntax recall has declined, but I’m okay with it. Here’s why.

The entire tech industry is moving toward AI-augmented development, with tools like GitHub Copilot, Cursor, and Claude Code becoming standard for coding. Fighting this trend would be like insisting on hand-calculating spreadsheets in the Excel era. The skill that matters now isn’t memorizing syntax. It’s knowing what to build and how to direct the AI to build it well.

But I recognize this is a privilege. I have decades of foundational knowledge. I know why code works, even if I’ve forgotten the exact incantation. Someone learning to code entirely through AI might never build that foundation.

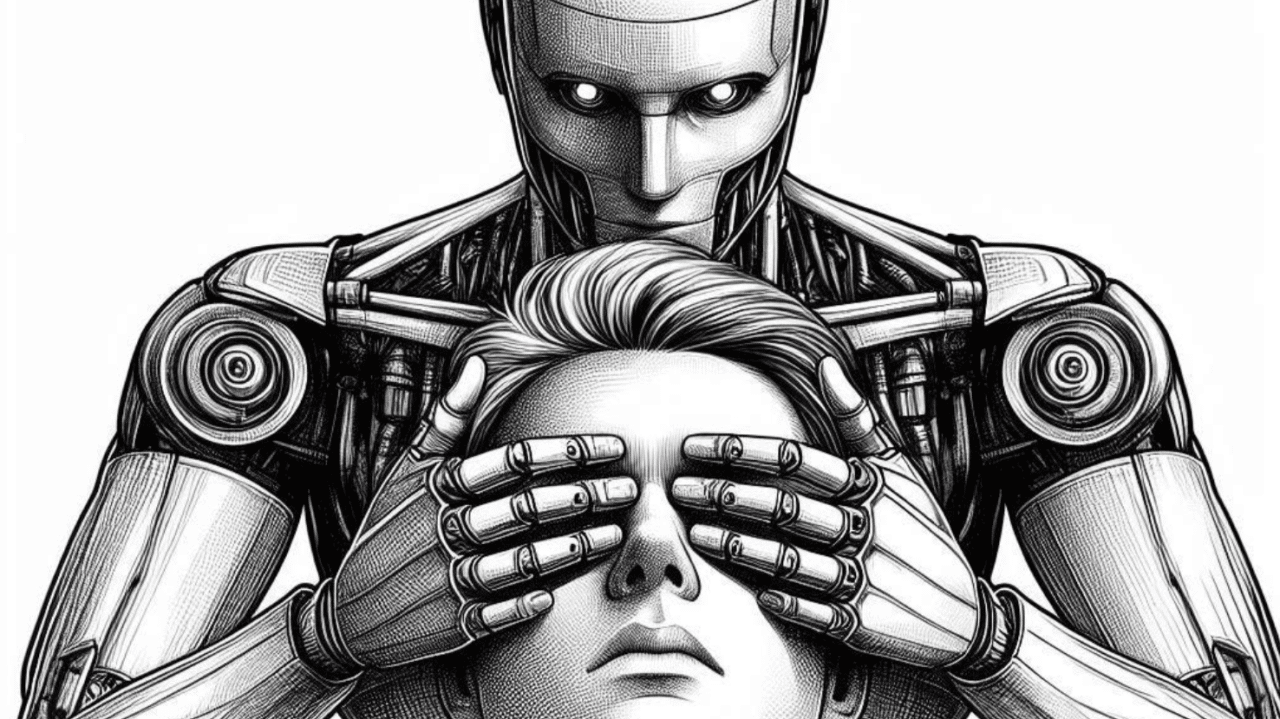

What the Research Says We Should Actually Worry About

The MIT study’s most alarming finding isn’t skill atrophy but reduced critical thinking. Outsourcing judgment and reasoning to ChatGPT, Claude, or Gemini skips the cognitive work that builds evaluative frameworks, unlike calculators or GPS, which only handle mechanical tasks.

The MIT study points to something more concerning than skill atrophy: reduced critical thinking. When we outsource not just tasks but judgment, we stop developing the mental frameworks that help us evaluate information.

Consider this: if you ask AI to summarize an article, analyze a problem, or form an opinion, you’re skipping the cognitive work that builds those capabilities. You get the output without the exercise.

This is different from calculators or GPS. Those tools handle mechanical tasks. AI handles reasoning. And reasoning is the thing that makes us, well, us.

A Balanced Approach: Using AI Without Losing Yourself

A practical strategy is a four-part framework: attempt problems yourself for 10 minutes first, use AI as a teacher rather than a replacement, maintain AI-free zones for journaling and creative work, and regularly test yourself on skills you want to keep sharp.

After reading through the research and examining my own experience, here’s what I’m trying to do differently.

1. Attempt first, then augment. Before asking AI for help, I spend at least 10 minutes trying to solve problems myself. Even if I fail, the struggle builds neural pathways.

2. Use AI as a teacher, not a replacement. When Claude suggests a better approach, I ask it to explain why. Then I try to apply that principle myself next time.

3. Maintain “AI-free” zones. Some tasks I do entirely manually: journaling, first drafts of creative writing, and mental math when the stakes are low. It’s like going to the gym for my brain.

4. Stay aware of atrophy. I regularly test myself on skills I care about keeping sharp. If I notice decline, I dial back the AI assistance in that area.

Books Worth Reading on This Topic

Three books go deep on how technology reshapes cognition: Nicholas Carr’s “The Shallows” anticipated many of the effects on attention we now see with AI, Daniel Kahneman’s “Thinking, Fast and Slow” explains the System 1/System 2 thinking that AI disrupts, and Cal Newport’s “Deep Work” offers practical strategies for protecting focused thinking.

If you want to go deeper on the science of how technology shapes our thinking, these are worth your time.

The Shallows: What the Internet Is Doing to Our Brains by Nicholas Carr. The classic exploration of how digital tools reshape cognition. Written before ChatGPT but eerily prescient.

Thinking, Fast and Slow by Daniel Kahneman. Essential reading on how we think and where our cognition can be hijacked. Understanding System 1 vs System 2 thinking helps you use AI more intentionally.

Deep Work by Cal Newport. A practical guide to protecting and developing the cognitive skills that AI can’t easily replicate.

The Bottom Line: Your Brain Isn’t Broken, It’s Adapting

Is AI making us dumber? The honest answer is that it depends on how you use it.

Used as a crutch that does your thinking for you? Yes, your cognitive abilities will decline. The early research points clearly in that direction.

Used as a tool that amplifies your existing capabilities and teaches you new ones? You might actually get sharper. My grammar certainly did.

The question isn’t whether to use AI. That ship has sailed. The question is whether you’re directing the AI or letting it direct you.

Common Questions About AI and Cognitive Decline

Is AI making us dumber or just changing how we think?

Both, honestly. We’re losing some skills (mental math, navigation, rote memorization) while potentially gaining others (information synthesis, prompt engineering, working with AI). Whether this is “dumber” depends on what you value. The MIT research suggests critical thinking specifically is at risk, which is worth taking seriously.

Should I stop using AI tools to protect my brain?

That’s probably overkill. The research suggests the issue is over-reliance, not use itself. A more practical approach is to use AI as a collaborator rather than a replacement, and maintain some cognitive “exercise” by doing mentally challenging tasks without AI assistance regularly.

Are younger people more at risk since they’re growing up with AI?

This is a real concern that researchers are watching closely. Adults who learned skills before AI have a foundation to fall back on. Someone who learns exclusively through AI assistance might never build that foundation. It’s similar to concerns about kids using calculators before learning arithmetic.

What skills should I actively protect from AI dependency?

Focus on protecting critical thinking, creative problem-solving, writing your own first drafts, forming opinions before seeking AI input, and any domain-specific expertise that matters to your career. These are the capabilities most at risk when you outsource them.

Want to learn how to use AI tools thoughtfully without losing your edge? Check out our Start Here guide for practical approaches to AI in everyday life.


Disclosure: This post contains affiliate links. If you purchase through these links, I may earn a small commission at no extra cost to you. I only recommend books I genuinely think are worth reading.
