ℹ️ Quick Answer: Anthropic refused to let the Pentagon use Claude for mass domestic surveillance and fully autonomous weapons. The federal government banned them from all contracts and labeled them a supply chain risk. Hours later, OpenAI signed the deal Anthropic walked away from. Then Claude shot to number one on the App Store as users voted with their downloads.

What’s Inside

- What Happened Between Anthropic and the Pentagon

- The Two Lines Anthropic Refused to Cross

- OpenAI Signed the Deal Hours Later

- The Public Voted with Their Downloads

- Why the Anthropic Government Ban Matters If You Use AI Every Day

- Common Questions About the Anthropic Government Ban

I don’t pretend to understand defense contracts or government procurement. The whole Anthropic government ban story is outside my wheelhouse, but when an AI company walks away from $200 million because of two principles it will not bend on, that catches my attention.

There’s an old saying. If you don’t stand for something, you’ll fall for anything. Anthropic just showed us what they stand for.

Full disclosure. I use Claude in my everyday life, so I’m not a neutral party here. But the facts of this story speak for themselves.

What Happened Between Anthropic and the Pentagon

Anthropic had a contract worth up to $200 million to deploy Claude on the Pentagon’s classified networks. The Defense Department demanded the company remove two usage restrictions. Anthropic refused, and the federal government banned them from all government work.

Here’s the timeline. Anthropic was the first AI lab to get its models running across the Department of Defense’s classified network. The deal was worth up to $200 million, but in February 2026, Defense Secretary Pete Hegseth gave Anthropic CEO Dario Amodei an ultimatum. Remove the restrictions on how the military can use Claude, or lose everything.

Amodei didn’t blink. On February 26, he told the Pentagon that Anthropic “cannot in good conscience accede to their request.” The next day, the President ordered all federal agencies to stop using Anthropic products. Hegseth went a step further, labeling Anthropic a “supply chain risk.” That’s a designation normally reserved for companies connected to foreign adversaries, not American AI labs based in San Francisco.

Federal agencies now have six months to phase out Claude entirely. This isn’t the first time Anthropic has taken a firm stand, either. The company recently caught three Chinese AI labs copying Claude through 16 million unauthorized exchanges and went public with the findings.

The Two Lines Anthropic Refused to Cross

Anthropic drew two red lines. No mass surveillance of American citizens and no fully autonomous weapons systems. Those were the only restrictions the Pentagon demanded they remove.

Amodei laid it out plainly in a CBS News interview. “Frontier AI systems are simply not reliable enough to power fully autonomous weapons,” he said. On surveillance, his position was just as direct. “Mass domestic surveillance is incompatible with democratic values.”

Anthropic tried to find middle ground. The company said it “tried in good faith” to reach an agreement and supported “all lawful uses of AI for national security aside from the two narrow exceptions.” Amodei even pointed out that these restrictions “have not affected a single government mission to date.”

None of that mattered. The Pentagon wanted unrestricted access across all lawful use cases. Anthropic said no.

When asked how the company would survive losing its government business, Amodei didn’t flinch. “Not only will Anthropic survive it, we’re also going to be fine.” The company also announced it would challenge the supply chain risk designation in court, calling it “legally unsound” and a “dangerous precedent for any American company that negotiates with the government.”

OpenAI Signed the Deal Hours Later

Within hours of Anthropic’s ban, OpenAI CEO Sam Altman announced a new Pentagon deal to deploy ChatGPT on classified networks. It was a direct replacement for Claude.

The timing was impossible to ignore. On a Friday evening, just hours after the ban, Altman posted that OpenAI had “reached an agreement with the Department of War to deploy our models in their classified network.”

Altman claimed OpenAI’s deal included similar safety protections. “Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems,” he wrote.

Altman also admitted the deal was “definitely rushed” and that “the optics don’t look good.” On X, he said OpenAI “really wanted to de-escalate things” and believed the deal offered was acceptable.

Earlier that same week, Altman had publicly supported Anthropic’s position. The whiplash was not lost on people.

The Public Voted with Their Downloads

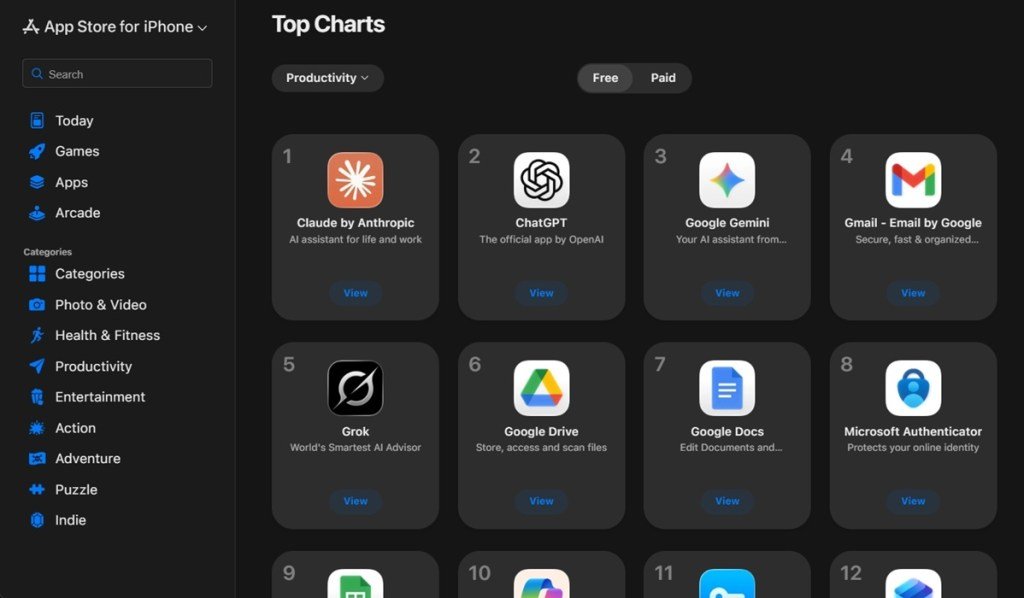

After the ban, Claude surged to number one on Apple’s App Store. ChatGPT users started leaving in visible, vocal numbers.

This is where the story gets interesting for anyone who uses AI tools every day. The Saturday after Anthropic got banned, Claude became the number one free app on the U.S. App Store. Not just number one in productivity or a subcategory. The top free app in the entire store.

A “Cancel ChatGPT” movement exploded across Reddit. One post urging people to delete ChatGPT racked up 30,000 upvotes. On the ChatGPT subreddit, users posted screenshots of deleting their accounts and switching to Claude.

More than 700 Google and OpenAI employees signed an open letter urging their own companies to stand with Anthropic’s position on autonomous weapons and surveillance. A viral photo showed chalk art outside Anthropic’s San Francisco offices reading “you give us courage.”

The numbers tell the rest. Anthropic’s daily signups tripled. The company’s user base has grown more than 60% since January 2026. Claude had already been on an upward run, with the recent Sonnet 4.6 release bringing top tier performance at a fraction of the cost. But this surge was about something different. People weren’t switching because of benchmarks. They were switching because of principles.

Why the Anthropic Government Ban Matters If You Use AI Every Day

The company behind your AI assistant has principles that shape what the tool will and won’t do. Picking an AI tool means picking those principles too.

You might think a government contract dispute has nothing to do with your Tuesday afternoon ChatGPT session. Think about it differently though.

When you type a question into Claude or ChatGPT or Gemini, the company behind that tool has already decided what it will and won’t do with your data, your queries, and its technology. Anthropic just proved that its safety commitments aren’t marketing copy. They cost the company $200 million and a federal ban.

If you’ve been wondering whether the AI industry is heading for a bubble or building something real, pay attention to moments like this. February 2026 was the first time a major AI company lost a massive contract because it refused to compromise on two specific principles. And the public rewarded them for it.

Whether you use Claude, ChatGPT, Gemini, Perplexity, or something else entirely, this moment changed the conversation about what we should expect from the companies building the AI tools we rely on every day.

Common Questions About the Anthropic Government Ban

What does the Anthropic government ban mean for regular Claude users?

The ban only affects federal government contracts. Claude is still available through Anthropic’s website, apps, and API for everyone else. Nothing changes for regular users.

Did Anthropic break any laws by refusing the Pentagon?

No. Anthropic was negotiating contract terms with the Department of Defense. They declined to remove two specific restrictions on mass surveillance and autonomous weapons. The government responded by canceling the contract and banning Anthropic from future government work.

Should I switch from ChatGPT to Claude because of this?

That depends on what you value. Both tools work well for everyday use. If a company’s ethics and the way it handles pressure from powerful institutions matter to you, this story is worth weighing when you pick your AI assistant.

What happens to government agencies already using Claude?

They have six months to phase out all Anthropic products and transition to alternatives. OpenAI has already signed a deal to replace Claude on the Pentagon’s classified networks.

Sometimes the most telling thing about a company is what it walks away from. Anthropic walked away from a contract worth up to $200 million and a seat at the Pentagon’s table. The public responded by making Claude the top free app on the U.S. App Store. Whether that changes anything long term remains to be seen. But for now, it’s a solid reminder that the values behind your AI tools matter just as much as the tools themselves.

